'Data driven' organizations are the least prepared for AI

Hi there,

Welcome back to Untangled. It’s written by me, Charley Johnson, and supported by members like you. This week, a provocation: the organizations most confident in their data are probably the least prepared for what AI actually exposes.

As always, please send me feedback on today’s post by replying to this email. I read and respond to every note.

On to the show!

🏡 Untangled HQ

This Week:

Stewarding Complexity: Earlier this week, we tackled how to shift from control to stewardship. Next up? How to exercise agency in command and control systems.

Coming Up:

Systems Change: Cohort 7 kicks off tomorrow -- be the first to hear about it.

Facilitators’ Workshop: We made a whole session about your most exhausting colleague — learn how to navigate them.

Untangled Collective: Turns out the most powerful person in the room is often a spreadsheet or an algorithm. This session teaches you to map its power — and everything else quietly running the show.

🧶 Deep Dive

‘Data driven’ organizations are the least prepared for AI

‘Data driven’ organizations are best positioned to capture value in an age of AI — that’s the predominant story anyway. If you’ve been building proprietary data sets, you can create contextual AI tools. If you’ve been using data to drive decisions, you’re set up to automate key tasks.

Maybe.

But I think the opposite is just as plausible: ‘data driven’ organizations might be the least prepared for what AI actually exposes.

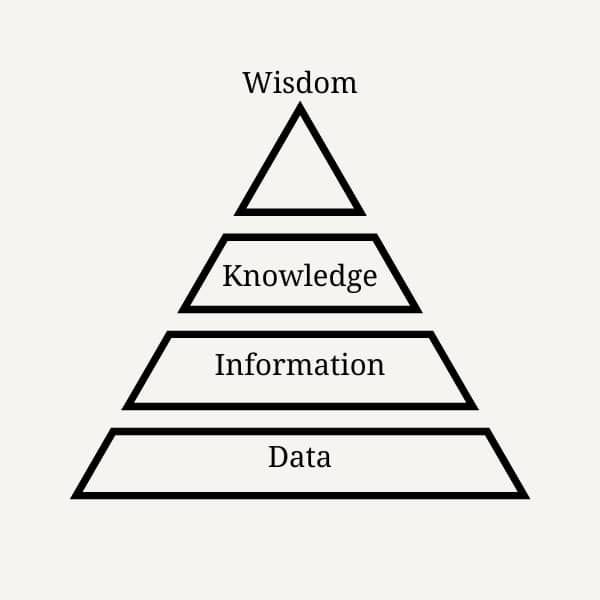

The reason why starts with a silly pyramid of false premises, known as ‘DIKW’ — data > information > knowledge > wisdom.

In this framework, data is ground truth. Add context and you get information, which morphs into knowledge when someone understands it. Wisdom sits at the top, as the ability to apply judgement and experience to knowledge.

This pyramid has always been wrong, and it has led organizations astray. Data aren’t a neutral reflection of reality; they are made. Someone decided what to measure and what to leave out. What counts as a relevant question. What counts as legitimate evidence. What gets surfaced in a dashboard and what gets buried. As Donna Haraway put it, all knowledge is knowledge from somewhere. The point isn’t that data are useless. It’s that data don’t output The Truth. They output partial perspectives that carry the assumptions of whoever built the system. The somewhere doesn’t go away just because we stopped talking about it.

Moreover, information isn’t simply data plus context. There has been a longstanding project to separate information from individual interpretation and meaning making. Claude Shannon, the “father of information theory,” actually excluded the conscious subject altogether in his definition. He simply lopped off semantic meaning and said all languages could be understood by their syntax or form. Why? Because that allowed Shannon to claim that language could be manipulated in mathematical terms. Just patterns and probabilities, no semantic meaning to see here! As Meghan O’Gieblyn recounts in God, Human, Animal, Machine, Shannon knew that “messages have meaning,” but he considered this “irrelevant to the engineering problem.” Fair enough, for an engineering problem. The trouble came later, I suppose, when people applied that bracket to cognition, organizations, and knowledge itself. If only he could have given a heads-up to those building entire organizations on a false premise!
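If you want to see Shannon's bracket in action, here is a rough sketch in Python. It is a deliberately simplified illustration, not Shannon's full treatment: it measures entropy from character frequencies alone, and the example sentence and the function name `char_entropy` are my own assumptions. Under that simple measure, a meaningful sentence and a scramble of the same characters score identically.

```python
import math
import random
from collections import Counter


def char_entropy(text: str) -> float:
    """Shannon entropy in bits per character, using character frequencies only.

    Assumption: a simple frequency-based measure that ignores character order.
    """
    counts = Counter(text)
    total = len(text)
    # Sort the counts so the sum is computed in the same order for any
    # rearrangement of the same characters.
    return -sum((n / total) * math.log2(n / total) for n in sorted(counts.values()))


# Hypothetical example sentence, chosen only for illustration.
message = "the patient looks fine but something is off"

# Scramble the same characters into gibberish (seeded so the run is repeatable).
chars = list(message)
random.Random(0).shuffle(chars)
scrambled = "".join(chars)

print(message, "->", round(char_entropy(message), 3), "bits per character")
print(scrambled, "->", round(char_entropy(scrambled), 3), "bits per character")
```

Both lines print the same number. The measure sees only the pattern of symbols, so nothing in it can tell the sentence a nurse would act on from the noise. Richer statistical models do account for symbol order, but they still work on form rather than on what a message means to someone.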

Data-driven organizations have been making that same mistake ever since — treating information as if it contains meaning on its own, rather than acquiring meaning through interpretation. And as strategist Vaughn Tan puts it, meaning-making is precisely what’s at stake:

What makes us human is our ability to do things which are not-yet-understood, which require us to be able to create meaning where there wasn’t meaning before.

In treating information as inherently meaningful, these organizations transfer power away from the people who make meaning, toward the systems that process symbols. They’ve devalued the conscious subject, the meaning-maker. The person for whom information is actually information — because it means something!

Which brings us to what the DIKW pyramid most badly misunderstands: knowledge. Knowledge is contextual. It is embodied. As Dave Snowden argues, “We always know more than we can say and we will always say more than we can write down.” Most of what we know remains unconscious until circumstances (surprise, confusion, deliberate reflection) require us to bring it into explicit awareness.

As I argued in an essay about abduction and AI, this implicit knowledge base is unbelievably large in ordinary people, and it’s critical for developing educated guesses, gut feelings, and hunches. This is the nurse’s ‘feeling’ at the bedside. The experienced practitioner’s instinct that something is off before they can say why. The team’s accumulated understanding of how decisions actually get made versus how they’re supposed to get made.

It develops through practice, observation, and being wrong in particular situations over time. It exists in bodies, in trusted relationships, in the narrative of shared experience. It cannot be translated into a training dataset, a model, or a prompt without losing what makes it useful.

This is what data-driven organizations have been systematically undervaluing. And it’s what AI systems, by design, cannot replicate. The knowledge simply isn’t in the text. But ‘data driven’ organizations have tricked themselves into believing that it is. Paradoxically, the organizations most excited by AI are those who have made themselves most susceptible to it.

If you’ve told your board and staff that wisdom and knowledge live in the data themselves — that the numbers speak for themselves, that the dashboard is the truth — then you’ve already done the work of making yourself vulnerable. You’ve delegitimized the judgment of the people who actually hold the organization’s knowledge. You’ve built a culture that treats intuition as bias and context as noise. And now a technology arrives that does exactly what you’ve been doing, only faster and cheaper.

The knowledge that actually runs organizations was never in the data. It was in the people who knew which numbers to trust and which to ignore. The ones who could read a room, remember what failed three years ago, and sense when something was off before they could say why. That knowledge doesn’t live in any IT system. It accumulates through time, friction, and relationship — and it walks out the door when the people do.

The question isn’t whether your organization is ready for AI. It’s whether you remember what you already knew before you decided data was enough.

🚨 Stewarding AI

Most organizational approaches ask: what can AI do or what can we automate? STEWARD asks: what kind of organization do we become when we use it? Because ‘AI’ is not a tool to deploy. It’s a set of relationships to steward. Be the first to hear when my new course -- Stewarding AI: How to Build Responsible Principles, Workflows, and Practices -- launches.

💫 Work With Me

Here are 4 ways I can help:

Facilitation: I can help facilitate your team through complex and fraught dynamics, so that they can achieve their purpose.

Advising: I can help you navigate uncertainty, make sense of AI, and facilitate change in your system.

Organizational Training: Everything you and your team need to cut through the tech-hype and implement strategies that catalyze true systems change. (For either Stewarding AI or Systems Change for Tech & Society Leaders)

1:1 Leadership Coaching: I can help you facilitate change — in yourself, your organization, and the system you work within.

Okay, that’s it for now.

Charley
